Building on a robust metadata and asset system, a multithreaded Alembic exporter would export all the animation data from Maya while preserving asset data such as material names. The exporter ran a FIFO thread queue in the background, allowing the system to take full advantage of the available cores. Basically everything an animator would interact with was wrapped into an asset specification for automatic processing.
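As a rough illustration of the FIFO thread queue idea, here is a minimal sketch using only the Python standard library. It is not the original exporter: `run_export_queue`, `fake_export` and the job dictionaries are hypothetical names, and the actual Alembic export call into Maya is replaced by a placeholder, since the source does not say how the exporter invoked Maya from its worker threads.

```python
import queue
import threading


def run_export_queue(jobs, export_fn, num_workers=4):
    """Process export jobs in FIFO order on a pool of worker threads.

    Returns a dict mapping each job's asset name to the export result,
    or to the exception raised while exporting it.
    """
    job_queue = queue.Queue()  # queue.Queue hands items out first-in, first-out
    results = {}
    results_lock = threading.Lock()

    def worker():
        while True:
            job = job_queue.get()
            if job is None:  # sentinel: no more work for this worker
                job_queue.task_done()
                break
            try:
                outcome = export_fn(job)
            except Exception as exc:  # one failed asset must not stop the queue
                outcome = exc
            with results_lock:
                results[job["asset"]] = outcome
            job_queue.task_done()

    threads = [threading.Thread(target=worker, daemon=True)
               for _ in range(num_workers)]
    for thread in threads:
        thread.start()
    for job in jobs:
        job_queue.put(job)
    for _ in threads:
        job_queue.put(None)  # one sentinel per worker

    job_queue.join()  # block until every queued item has been processed
    for thread in threads:
        thread.join()
    return results


def fake_export(job):
    # Hypothetical stand-in for the real Alembic export of one asset.
    return "{asset}_{start}-{end}.abc".format(**job)
```

Called as `run_export_queue(jobs, fake_export, num_workers=2)` with a list of job dictionaries, the function returns once all assets have been processed, so a caller can report failures per asset instead of aborting the whole batch.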

Pipeline Architecture

Python package backend

A custom Python package serves as the backend to automate and work with assets programmatically (think animation exporters, automatic material assignment or AOV setup).

Asset based frontend

An asset-based frontend that defines how artists and the Python codebase interact with assets. Think Maya references with custom metadata nodes or Houdini Python HDAs.

Pipeline under Version Control

The complete pipeline is under version control with Git. An online repository on GitHub made it easy for external project members to set up the pipeline or develop remotely.

Online Documentation

Tutorials, explanations, FAQs and the API documentation for the skol Python package were accessible from the online documentation built with Sphinx.

Standalone Tools

Handy little helper tools that would set the environment and start our pipeline DCCs, automate Photoshop Droplet processing, or expose a GUI to convert images to Houdini's optimized .rat texture format (BatchRAT Converter by Johannes Franz).

Standards

From animation to lighting and FX, from rendering to comp: exporters and setup scripts ensure consistent standards for the exchange between departments during production, from Abc exporters to automatic render, path and AOV setup. These consistent standards allow for an efficient layer of programmatic processing on top and minimize communication overhead.

Details

Multithreaded Alembic Export

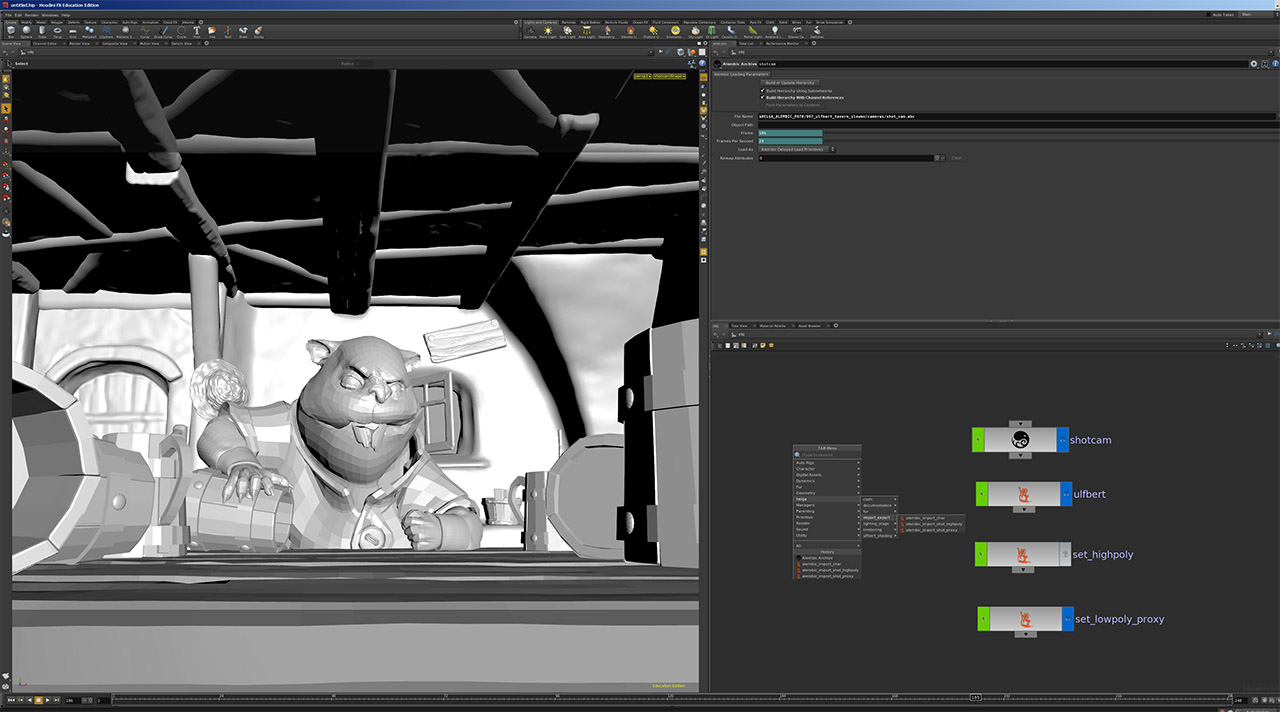

Customized Alembic Import with Python HDAs

Using the custom metadata from the asset caches, we had a customized Alembic import into Houdini via Python HDAs that served as an interface to the skol package. Benefits of our custom Alembic import included automatic grouping and material assignment, bypassing of Alembic XForm nodes, and a completely flat hierarchy, among others.
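To make the grouping step more concrete, here is a minimal sketch in plain Python, independent of Houdini. It assumes the cache metadata can be read as a mapping from shape path to a dictionary with a `material` key. That layout, the function name `group_by_material` and the example paths are illustrative assumptions, not the actual skol data format.

```python
from collections import defaultdict


def group_by_material(shape_metadata):
    """Group shapes by their exported material name.

    shape_metadata maps a hierarchical shape path to a metadata dict
    (assumed layout). Only the leaf name of each path is kept, which
    mirrors the idea of a flat hierarchy after import.
    """
    groups = defaultdict(list)
    for shape_path, meta in shape_metadata.items():
        material = meta.get("material", "unassigned")
        leaf_name = shape_path.rsplit("/", 1)[-1]  # drop the parent transforms
        groups[material].append(leaf_name)
    return {material: sorted(names) for material, names in groups.items()}


# Hypothetical metadata as it might be read from an asset cache.
example_metadata = {
    "/tavern/table/tableShape": {"material": "wood"},
    "/tavern/chair/chairShape": {"material": "wood"},
    "/tavern/mug/mugShape": {"material": "metal"},
    "/tavern/floor/floorShape": {},
}
```

Inside a Python HDA, a mapping like the one returned here could drive both group creation and material assignment, since each group name already is the material name.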

Python startup wrapper

A small standalone Python tool serves as the entry point to our pipeline. It sets the environment, starts our DCCs and exposes some convenience functionality. The tool was usually used in non-GUI mode, with shortcuts and command-line arguments doing all the adjustment.
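A startup wrapper of this kind can be sketched with `argparse` and `subprocess` from the standard library. Everything specific below is an assumption for illustration: the executable names in `DCC_EXECUTABLES`, the `PIPELINE_ROOT` variable, the `python` subfolder and the `--root` and `--dry-run` flags are hypothetical and not taken from the original tool.

```python
import argparse
import os
import subprocess

# Hypothetical mapping from DCC name to the executable on the PATH.
DCC_EXECUTABLES = {"maya": "maya", "houdini": "houdini", "nuke": "nuke"}


def build_environment(pipeline_root, base_env=None):
    """Return a copy of the environment with the pipeline package prepended."""
    env = dict(os.environ if base_env is None else base_env)
    package_dir = os.path.join(pipeline_root, "python")  # assumed layout
    existing = env.get("PYTHONPATH", "")
    env["PYTHONPATH"] = os.pathsep.join(p for p in (package_dir, existing) if p)
    env["PIPELINE_ROOT"] = pipeline_root  # hypothetical variable name
    return env


def parse_args(argv=None):
    parser = argparse.ArgumentParser(description="Start a pipeline DCC.")
    parser.add_argument("dcc", choices=sorted(DCC_EXECUTABLES))
    parser.add_argument("--root", default=os.getcwd(),
                        help="pipeline root directory")
    parser.add_argument("--dry-run", action="store_true",
                        help="print the command instead of launching it")
    return parser.parse_args(argv)


def main(argv=None):
    args = parse_args(argv)
    env = build_environment(args.root)
    command = [DCC_EXECUTABLES[args.dcc]]
    if args.dry_run:
        print(" ".join(command))
        return command
    # Launch the DCC without waiting for it to exit.
    subprocess.Popen(command, env=env)
    return command
```

A desktop shortcut could then call something like `main(["houdini", "--root", "/path/to/pipeline"])`, which matches the non-GUI usage described above: all adjustment happens through arguments.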

Automatic Render/Path/AOV setup

Mantra customization scripts ensured a correct initial render setup as a common base for further tweaking, as well as correct path settings. Defining global AOV standards with central control over them, similar to V-Ray Render Elements, helped to ease render and compositing workflows.

AOV HDAs were integrated into each shader, and a Python HDA would globally adjust values for the passes. As a result, passes like RGB mattes needed no setup time and came for free. At the end of the video is a breakdown of our standard AOVs.
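The idea of one central place that controls all passes can be sketched as a small registry with global overrides. The AOV names and settings below are hypothetical placeholders, not the production's actual standard AOVs, and the sketch leaves out the Houdini side entirely.

```python
# Hypothetical global AOV standard: pass name -> default settings.
STANDARD_AOVS = {
    "direct_diffuse": {"enabled": True, "type": "vector"},
    "direct_reflect": {"enabled": True, "type": "vector"},
    "matte_rgb": {"enabled": True, "type": "vector"},
    "depth": {"enabled": False, "type": "float"},
}


def resolve_aovs(standards, overrides=None):
    """Apply global overrides to the standards and list the enabled passes.

    Unknown pass names raise a KeyError so that typos cannot silently
    create passes outside the agreed standard.
    """
    resolved = {name: dict(settings) for name, settings in standards.items()}
    for name, changes in (overrides or {}).items():
        if name not in resolved:
            raise KeyError("Unknown AOV: {}".format(name))
        resolved[name].update(changes)
    return [name for name, settings in sorted(resolved.items())
            if settings["enabled"]]
```

Because every shader reads from the same definition, switching a pass on or off globally is a single override, for example `resolve_aovs(STANDARD_AOVS, {"depth": {"enabled": True}})`.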

Online Documentation

Tutorials, explanations, FAQs and the API documentation for the skol Python package were accessible from the online documentation built with Sphinx. The documentation was linked with the GitHub pipeline repo, so whenever an update was pushed, the docs would automatically rebuild.

Photogrammetry

The tavern set was first built as a real miniature set by our director Marco Hakenjos and then digitized via photogrammetry with Agisoft PhotoScan. For this purpose we built a rig with a Canon 5D, a turntable and an Arduino. A PyQt tool by Vincent Uhlmann handled the communication with the Arduino board and triggered the shooting. A Python service written by me watched the photo folder and used Nuke to start a new mask render as soon as a new photo dropped in. All in all, a fully automatic pipeline from shooting to solvable data... very homegrown, but feature complete.
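The folder-watching service can be approximated with a simple polling loop. This is a sketch under assumptions, not the original service: the file extensions, the `render_mask.py` script name and the `nuke` executable name are hypothetical, and the command relies on Nuke's `-t` terminal mode to run a Python script without the GUI.

```python
import os
import time

# Assumed set of photo file types coming off the camera.
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".cr2", ".tif")


def find_new_photos(folder, seen):
    """Return photos in folder that are not in seen yet, and remember them."""
    new_photos = []
    for name in sorted(os.listdir(folder)):
        path = os.path.join(folder, name)
        if name.lower().endswith(IMAGE_EXTENSIONS) and path not in seen:
            seen.add(path)
            new_photos.append(path)
    return new_photos


def build_mask_command(photo_path, nuke_executable="nuke",
                       script="render_mask.py"):
    """Build a command that runs a (hypothetical) mask script in Nuke.

    The -t flag starts Nuke in terminal mode and executes the given
    Python script, passing the photo path on as an argument.
    """
    return [nuke_executable, "-t", script, photo_path]


def watch(folder, on_photo, interval=1.0, max_polls=None):
    """Poll folder and call on_photo(path) once for every new photo.

    max_polls limits the number of polls; None means run until interrupted.
    """
    seen = set()
    polls = 0
    while max_polls is None or polls < max_polls:
        for photo in find_new_photos(folder, seen):
            on_photo(photo)
        polls += 1
        if max_polls is None or polls < max_polls:
            time.sleep(interval)
```

In practice `on_photo` would pass `build_mask_command(photo)` to `subprocess.Popen`. A real service would also need to make sure a photo has finished writing before the render starts, which this sketch does not handle.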